No, Block did not fire 4,000 people because of AI

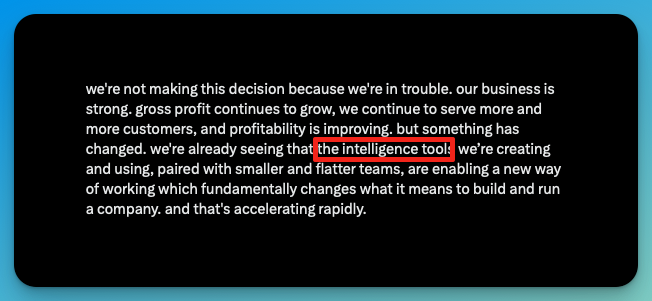

Yesterday, Jack Dorsey announced that Block is cutting 4,000 employees — roughly 40% of its workforce. The reason, according to his shareholder letter? "Intelligence tools."

I think this is an exceptional example of AI Washing and I would like to explain why.

In the letter, Dorsey wrote that "a significantly smaller team, using the tools we're building, can do more and do it better" and that "intelligence tool capabilities are compounding faster every week." Obviously, this is a pretty clear reference to AI efficiencies, not some alien technology.

He predicted that within a year, most companies will reach the same conclusion and make similar structural changes, using the same AI efficiency narrative. I would like to explain why I am sceptical about this, and why this strategy has less to do with technology than with financial engineering.

Market and media reaction

The stock jumped 24% in after-hours trading – it is obvious that Wall Street loved it, as they always do when news of profitable companies firing people comes out.

Block reported Q4 2025 earnings of $0.65 adjusted EPS on $6.25B revenue, matching estimates. Gross profit was up 24% year-over-year to $2.87B for the quarter. Full-year 2025 gross profit came in at $10.36 billion, with guidance of $12.2B for 2026. This is not a company in financial distress. Dorsey said as much himself: "Our business is strong."

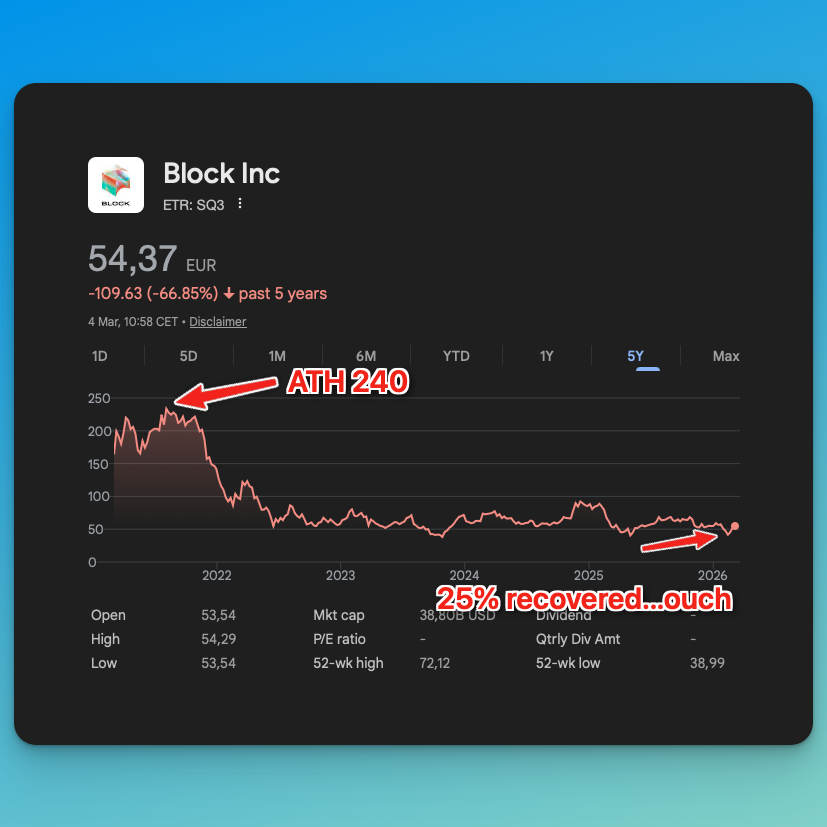

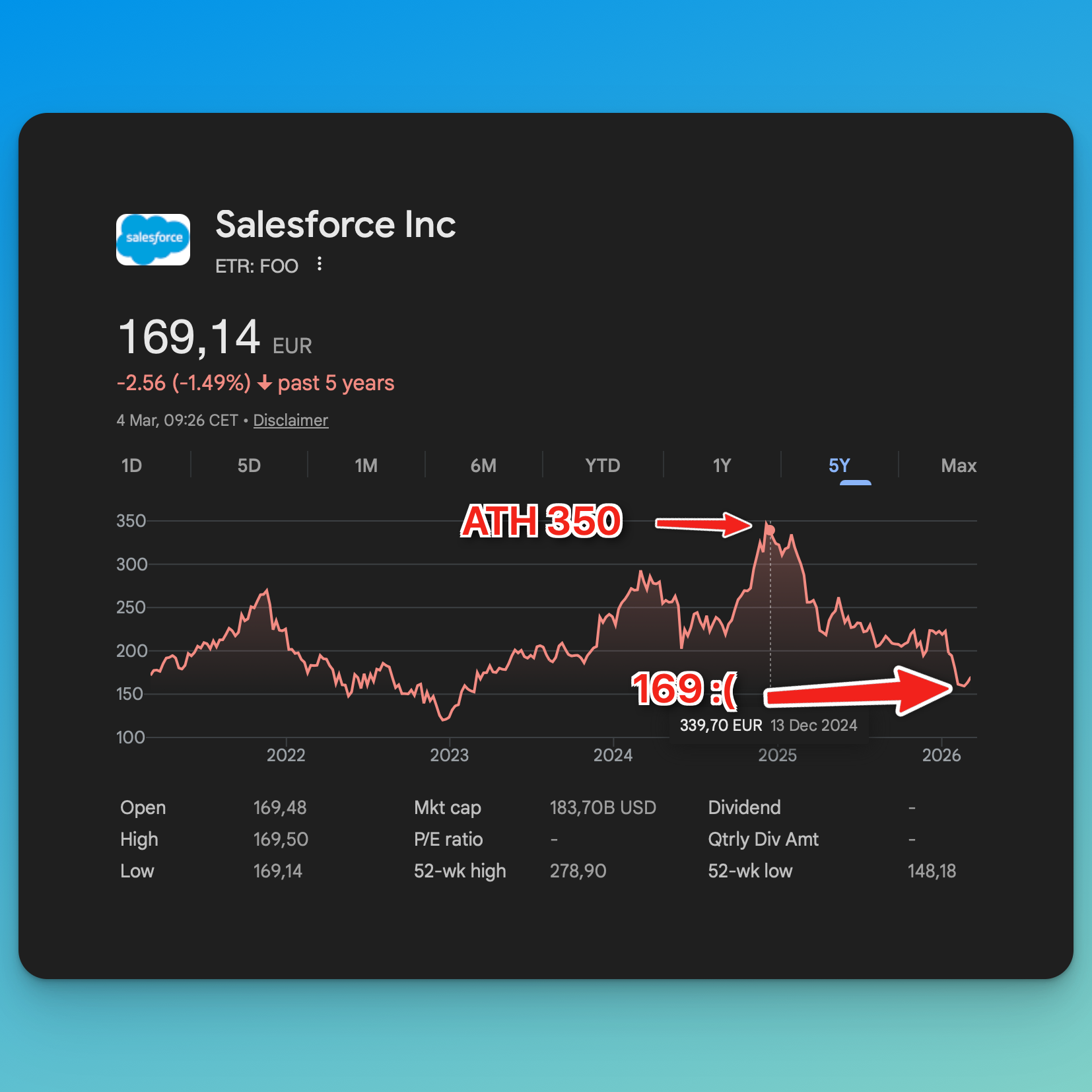

Now, what most media outlets praising the decision forget to mention is that the stock is still roughly 75% below its 2021 all-time high ($240), so they still have quite a lot of convincing to do. There is also a common trend among companies that make these AI-based announcements: they either have declining stock prices (Salesforce) or recently lost a huge chunk of their valuation before an IPO (Klarna, which I have written about extensively). But more about this later.

LinkedIn AI influencers almost exploded on seeing the news and wasted no time generating tons of AI slop to praise the decision and warn us of the impending doom that AGI will bring to white-collar work.

But let’s do some basic fact checking on these AI claims.

The AI efficiency story does not hold up

So, everything I write in the next paragraphs is from the perspective of someone actively working with AI daily. Coding, basic writing, call summaries, research, automation: as a one-man company, I do it all, and I am heavily motivated to automate anything I can. So, trust me when I say that we are not there yet. At least not in a state where AI can take over the work of 4,000 people without causing massive pain for the rest of the organization.

This has a name. It is called "AI washing" — using AI as a convenient narrative for layoffs that would have happened regardless.

Even Sam Altman — the CEO of OpenAI — acknowledged this just last week at the India AI Impact Summit, stating that "there's some AI washing where people are blaming AI for layoffs that they would otherwise do." When the CEO of the company selling AI says other CEOs are using his product as a scapegoat, maybe listen.

I wrote about this exact pattern a year ago when I looked at the AI washing OG - Klarna's AI claims. Klarna's CEO boasted about AI replacing 500 customer support agents, deprecating Workday, and stopping all hiring. When journalists actually investigated, the customer support team had been outsourced (not automated), Workday was replaced by Deel and Teamtailor (not AI), and the company still had dozens of open positions. The whole narrative was built to support an IPO story. Block's narrative has the same DNA.

Let's look at what the research actually says on AI productivity gains:

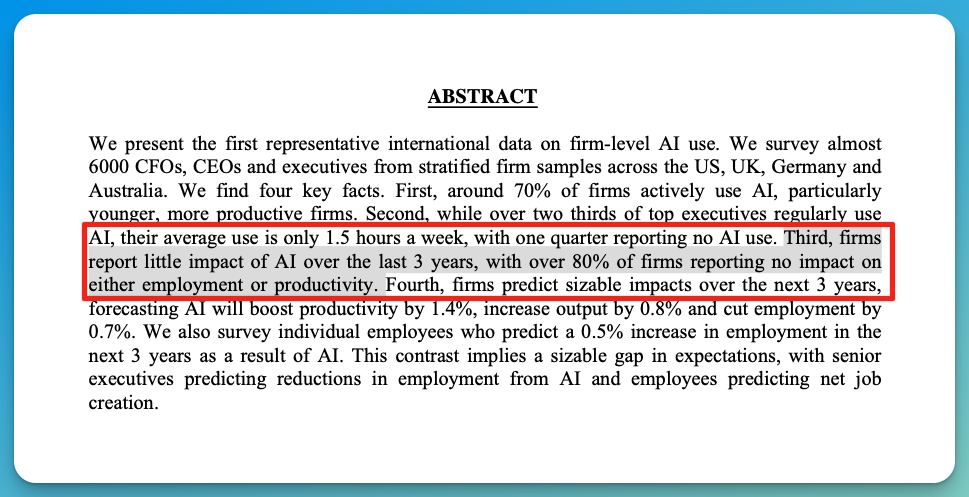

A study published last month by the National Bureau of Economic Research, surveying 6,000 executives across the US, UK, Germany, and Australia, found that nearly 90% said AI had no impact on workplace employment over the past three years, with 89% reporting no productivity boost either. This is despite 70% of these companies actively using AI.

Let me repeat that: the majority of companies that adopted AI have not yet seen any productivity boost.

This is not the first study – there have been countless released in the past 24 months – I am just quoting the latest one to avoid being blamed for “not quoting the latest data” or “adoption” not happening.

If adoption has supposedly "not happened yet" after almost four years, what does that tell us?

It tells us that companies are firing people on the BET that AI will get there. Not my words; it is actually HBR that said this:

The Harvard Business Review published research in January 2026 with a conclusion that should make everyone uncomfortable: companies are laying people off because of AI's potential, not its actual performance.

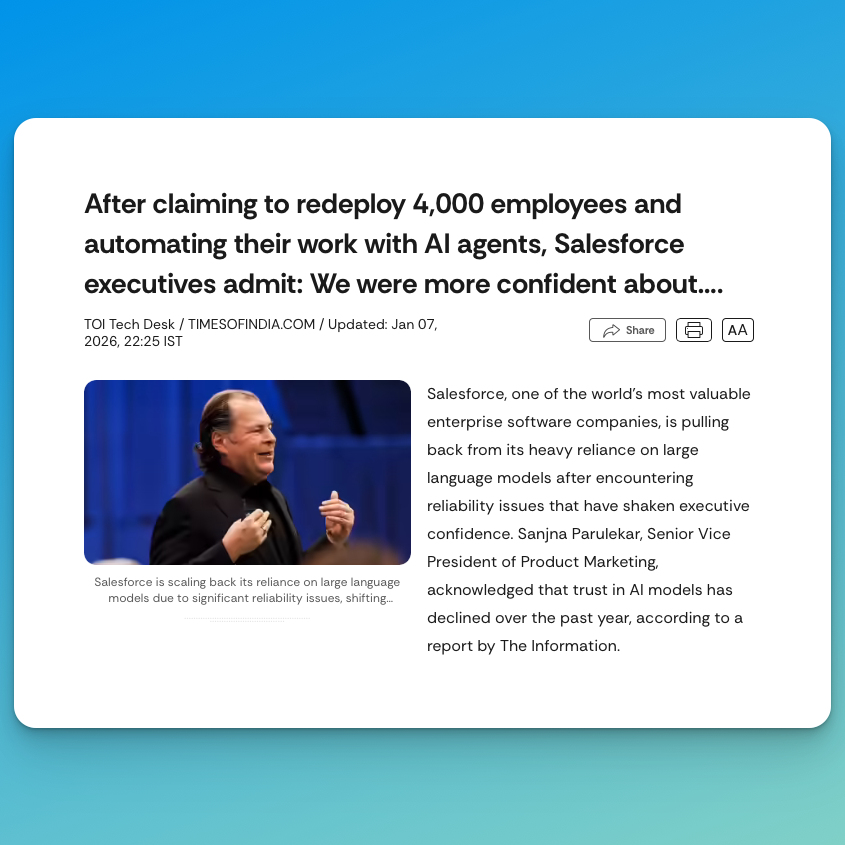

This is what happened to Salesforce last year, another example of a big tech company with declining stock performance that made brave statements about automating work with AI:

Marc Benioff, CEO of Salesforce: “We need 4000 heads less due to AI”

Only a few months later, his management team had to admit that “we were more confident about LLMs a year ago”. Let’s look at some of the issues they reported:

- When models received more than eight instructions, they started skipping steps

- When users asked off-topic questions, agents experienced "drift" and never returned to the task

- Hallucinations in production eroded customer trust

- In customer-facing roles, AI agents sometimes provided technically correct but contextually inappropriate responses, requiring human intervention.

Or in summary:

Instead of replacing work, AI often shifted it — staff were required to monitor outputs, correct mistakes, and manage edge cases, adding layers of oversight that leadership had not fully anticipated.

They are now pivoting back to “deterministic triggers” – translation: if-then statements - to make sure the software does what it is supposed to.

The core takeaway is pretty much a textbook case of what I have written about repeatedly — LLMs perform well in demos but struggle at enterprise scale without strict guardrails, and the "AI will just handle it" assumption consistently underestimates the extent to which human judgment and contextual awareness are baked into knowledge work.

What about AI-driven efficiencies in Software Engineering?

Fair question. If there is one area where AI productivity gains should be real, it is coding. I use Claude Code and Codex in all my projects and work — I know these tools save time. A Microsoft/Accenture study across nearly 5,000 developers found a 26% increase in completed pull requests when using AI tools.

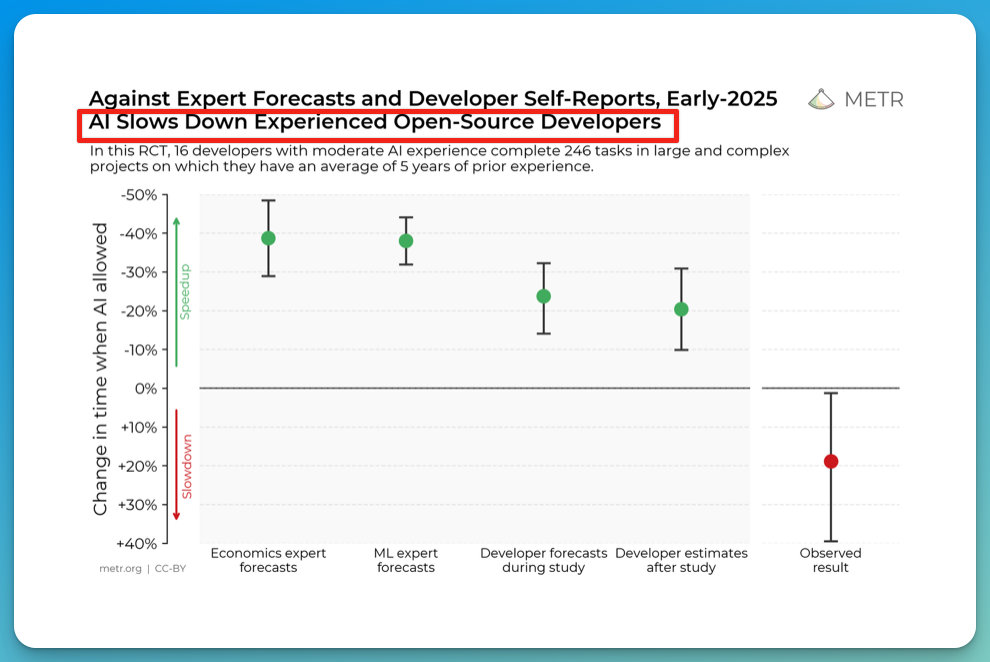

But even this is more nuanced than the headlines suggest. METR published a randomized controlled trial, arguably the most rigorous study on AI and developer productivity to date, that found experienced developers using Cursor Pro and Claude 3.5/3.7 Sonnet were actually 19% slower with AI tools. The developers themselves estimated they were 20% faster.

Their own perception was wrong.

Let that sink in. They felt faster, but they were slower by almost the same margin. That is a gap of roughly 40 percentage points between perception and measurement.
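For anyone who wants to check the perception gap, here is a minimal sketch using only the two figures quoted above. The variable names are mine, and treating "20% faster" and "19% slower" as signed changes on the same scale is a simplifying assumption.

```python
# Figures as quoted in the text; the names are illustrative, not from the study.
perceived_change = 0.20   # developers' own estimate: 20% faster
measured_change = -0.19   # trial result as quoted: 19% slower

# Gap between perception and measurement, in percentage points.
# Assumes both figures can be compared on the same signed scale.
gap_points = (perceived_change - measured_change) * 100

print(f"Perception gap: about {gap_points:.0f} percentage points")
```

The exact gap is 39 points, which is where the "roughly 40" comes from.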

METR updated the study just this week and noted that recruitment for their follow-up experiment is now difficult because developers refuse to work without AI, which tells you something about adoption but complicates the productivity claims.

Adoption is there, productivity is not.

The broader research by the University of Chicago's Anders Humlum, which examined 25,000 workers across 7,000 Danish workplaces, found a modest 3% productivity improvement from AI.

So even in software engineering — the strongest case for AI productivity — the picture is complicated, and the gains are far from the 50%+ that would justify halving a company's workforce. Now extend that to customer support, compliance, operations, HR, marketing, finance, and legal at a fintech company.

As I argued in my post on the real challenges of integrating generative AI in business, the fundamental issue is that LLMs hallucinate, and for any serious business process — especially in financial services — you cannot just hand tasks to an AI and walk away. You need guardrails, validation layers, and human oversight. This is expensive and slow to build, and it does not result in cutting half your workforce overnight.

Where are these efficiencies coming from? What are these intelligence tools? Did Jack download some new AGI in one of his shaman-guided time-travel sessions?

Not really, bear with me for a few more minutes, we are almost there.

What is actually happening: overhiring, bloat, and a weak labor market

Now let's talk about what Dorsey said on X after the announcement — because this is where his own words undercut the AI narrative.

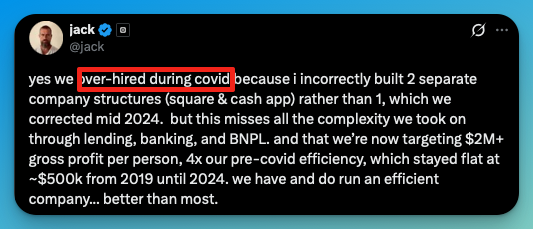

He wrote: "Yes, we over-hired during COVID because I incorrectly built 2 separate company structures (Square & Cash App) rather than 1, which we corrected mid 2024." He also cited the complexity Block took on through lending, banking, and BNPL.

This is not the first time Jack Dorsey has built a bloated company and watched it get slashed in half. Twitter had around 7,500 employees when Musk took it over in late 2022. Dorsey later admitted that he had grown the company too quickly and that Twitter "could not have survived as a public company."

Musk cut the workforce to roughly 1,300, a reduction of more than 80%, which honestly sounds realistic: for all the hate he gets, X is still working after the initial hiccups.

What is not mentioned enough is that Jack rolled his ~2.4% Twitter equity stake into Musk's takeover rather than taking cash. He watched Musk's playbook from the front row and apparently took notes: you can cut a large percentage of employees from a bloated company, and it will still work.

And I do believe the bloat. Let's look at some gems from the past:

@durbinmalonster Every one of my coworkers watching this I hope you’re happy


Or this?

They sure look busy :)

At least Musk did not have to AI-wash it.

Then we have another tell, Jack’s target: “$2M+ gross profit per person, up from ~$500K that ‘stayed flat from 2019 until 2024.’”

Let's do the math.

Block had 3,835 employees at the end of 2019 and over 12,400 by the end of 2022, more than tripling headcount in three years. The stock has dropped by more than 75% from its peak over the past five years. Block guided $12.2 billion in gross profit for 2026.

With ~6,000 employees post-layoffs, that is $12.2B / 6,000 = roughly $2.03M per employee. The $2M target is achievable purely through the layoffs and projected gross profit growth.

No AI productivity miracle required - just the magic of division 😊
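The division above can be sketched in a few lines. This is only an illustration using the numbers quoted in this post: the pre-layoff headcount of 10,000 is my assumption, implied by 4,000 cuts being roughly 40%, and the function name is hypothetical.

```python
# Numbers quoted in the post; HEADCOUNT_BEFORE is an assumption implied by
# "4,000 employees, roughly 40% of its workforce".
GROSS_PROFIT_2026_GUIDANCE = 12.2e9  # USD, Block's 2026 gross profit guidance
HEADCOUNT_BEFORE = 10_000
LAYOFFS = 4_000
TARGET_PER_PERSON = 2_000_000        # Dorsey's "$2M+ gross profit per person"


def gross_profit_per_employee(gross_profit: float, headcount: int) -> float:
    """Gross profit divided evenly across headcount."""
    return gross_profit / headcount


before = gross_profit_per_employee(GROSS_PROFIT_2026_GUIDANCE, HEADCOUNT_BEFORE)
after = gross_profit_per_employee(
    GROSS_PROFIT_2026_GUIDANCE, HEADCOUNT_BEFORE - LAYOFFS
)

print(f"Before layoffs: ${before / 1e6:.2f}M per employee")
print(f"After layoffs:  ${after / 1e6:.2f}M per employee")
print(f"Target met by headcount cut alone: {after >= TARGET_PER_PERSON}")
```

With the same guided gross profit, the figure moves from about $1.22M to about $2.03M per employee purely because the denominator shrinks.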

So, if there are no efficiency gains from magic AI and you remove 40% of a company, who is going to do the work?

I have the answer, and unfortunately, it is quite bad for the people who will stay in the company.

The people who stay will absorb the work, because they have no choice

Here is the part nobody is talking about. When you fire 40% of a company, the work does not disappear. Someone still has to do it. The remaining 6,000 employees will absorb the workload of 10,000 — not because AI made them more productive, but because they have no alternative.
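To put a number on "absorb", here is a rough sketch. It assumes the total amount of work stays constant and is spread evenly across whoever remains, which is a simplification; the headcounts are the ones used in this post and the function name is hypothetical.

```python
# Headcounts as used in the post. Assumption: total work is unchanged and
# is distributed evenly across the remaining employees.
HEADCOUNT_BEFORE = 10_000
HEADCOUNT_AFTER = 6_000


def workload_multiplier(before: int, after: int) -> float:
    """How much work each remaining person carries relative to before."""
    return before / after


multiplier = workload_multiplier(HEADCOUNT_BEFORE, HEADCOUNT_AFTER)
extra_share = multiplier - 1

print(f"Workload per remaining employee: {multiplier:.2f}x")
print(f"That is about {extra_share:.0%} more work each")
```

Under those assumptions, each remaining employee carries about two-thirds more work than before.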

The US labor market in early 2026 is brutal for white-collar workers. According to Indeed's latest labor market report, job openings per unemployed person dropped to 0.9 in December 2025 — below 1.0 for the first time since 2017, outside the pandemic.

White-collar employment has been shrinking since November 2022, even while GDP in those sectors has grown. The job market is, as Indeed economist Cory Stahle put it, "sclerotic and frozen."

So, in other words, if you have a job, you hold on to it.

That is the playbook. Fire 40%, tell the survivors to use AI tools, and let the labor market do the rest. If you are one of the 6,000 remaining Block employees and your workload just jumped by roughly two-thirds, what are you going to do? Quit into a market where:

- job openings per unemployed person are below 1.0?

- white-collar employment has been shrinking for three years?

- the hiring process takes months?

All of the people with families, mortgages to pay, and stock on a vesting schedule have no choice but to stay and quietly submit.

In short, you are going to eat it. And the company knows that.

And we already have a preview of what this means for these people's stress levels:

Researchers at UC Berkeley recently studied the exact AI-adoption dynamic within a 200-person tech firm. They found that employees using AI tools did increase their output — but they also took on significantly more work, not because they wanted to, but because AI made it easy to start tasks, and the implicit expectation was that they should. As one worker told the researchers: "You had thought that maybe, 'Oh, because you could be more productive with AI, then you save some time, you can work less.' But then, really, you don't work less."

If the labor market were tight — like it was in 2021-2022 — no CEO would dare fire 40% of the workforce for some abstract AI efficiency pitch.

The workers would leave for competitors. The power dynamic has flipped, and AI is the perfect excuse to pull these kinds of stunts.

What about AI then?

That is not to say that AI will not be force-fed into folks' daily lives. I have written on LinkedIn about companies that gave prizes for the best essays on AI adoption (how absurd is that). You can be sure that AI adoption will be rushed everywhere, because people will see no other way around it – you either do it, sing productivity chants with your senior management, or you go crazy from the workload.

A lot of these pilots will be crappy and will actually end up causing more work. LLMs are still fundamentally slot machines: sometimes they work, sometimes they do not. This is a hypothesis I have held for a while.

Many companies and vendors will make large bets in 2026 that models will solve hallucinations.

Maybe they thought it was RAG (which was extremely oversold as a wonder solution to all issues of LLMs).

Maybe they think it will be a technology improvement (although anyone with a decent understanding of the space knows that neither the transformer architecture nor scaling will solve hallucinations).

Of course, this will not happen.

So, what options are you left with as a manager if your AI pilot fails, but you have already invested a ton of money in it?

- Lie to senior management/shareholders and deploy some half-working stuff

- Take a huge loss and most likely lose your job.

We both know how human agency works, so option 2 is highly unlikely.

Prepare for option 1. My large bet is that life will be a bit worse in 2026 and 2027 with all of these half-baked solutions.

Check some funny videos of Chinese AI-powered self-driving delivery trucks failing miserably if you need more evidence of where things are going.

What does this mean for the global online recruiting industry?

For those of us in the recruitment industry, the implications are worth watching. More layoffs mean more job seekers entering an already saturated market. More competition per job posting. More opportunity for exactly the B2C monetization trend for job boards we have been tracking, and I have written about extensively.

This will lead to a further explosion of AI-powered application tools, further pressure on ATS application forms, and an influx of unqualified applications.

At the same time, if companies are genuinely trimming headcount and not backfilling, that means fewer job postings on the B2B side. This is a double squeeze for job boards: more candidates, fewer jobs.

We will have to start rebuilding the rails of online recruiting because what we have will NOT work in this new world.

My final take on Block and their AI Washing

Block did not fire 4,000 people because of AI. Block fired 4,000 people because, in Dorsey's own words, he over-hired during COVID, built a bloated dual-company structure, and is now correcting it. The narrative aligns perfectly with a financial engineering goal of reaching $2M in gross profit per employee.

This is the same CEO who grew Twitter into a bloated organization, publicly apologized for it, and then watched Musk gut it from the front row.

He learned from “the best,” and this pattern is not new. Only the excuse is new: this time it is AI, whereas Musk was blunt enough to call it what it was, a poorly managed company.

AI is the narrative wrapper. It sounds better than "we grew too fast and need to correct because I am a shitty CEO".

The people who just lost their jobs are walking into the worst white-collar job market in years. The people who kept their jobs are going to absorb the work of their former colleagues, not because AI made them significantly more efficient, but because they cannot afford to leave.

This narrative will repeat in the next few years, and I am afraid it will have dire consequences on many levels of our daily lives. Either it stops and we usher in a new age of hiring, or AI finally solves its current issues and we face massive unemployment.